It allows us to soften the requirement for discrete inputs, and instead use a linear combination of tokens as input to the model. To still be able to use Deep Dream, we have to utilize the so-called Gumbel-Softmax trick, which has already been employed in a paper by Poerner et. With Deep Dream changing the embeddings rather than input tokens, we can end up with embeddings that are nowhere close to any token. You might ask: "Why can't we use these embeddings to dream to?" The answer is that there is often no mapping from unconstrained embedding vectors back to real tokens. In this embedding space, words with similar meanings are closer together than words with different meanings. Using these embeddings, words are converted into high-dimensional vectors of continuous numbers. Language models operate on embeddings of words. This way, we get an idea about which part of the network is looking for what kind of input. The ideas of feature visualization are borrowed from Deep Dream, where we can obtain inputs that excite the network by maximizing the activation of neurons, channels, or layers of the network. Whenever such breakthroughs in deep learning happen, people wonder how the network manages to achieve such impressive results, and what it actually learned.Ī common way of looking into neural networks is feature visualization. This novel model has brought a big change to language modeling as it outperformed all its predecessors on multiple different tasks. It can be used for multiple different tasks, such as sentiment analysis or next sentence prediction, and has recently been integrated into Google Search.

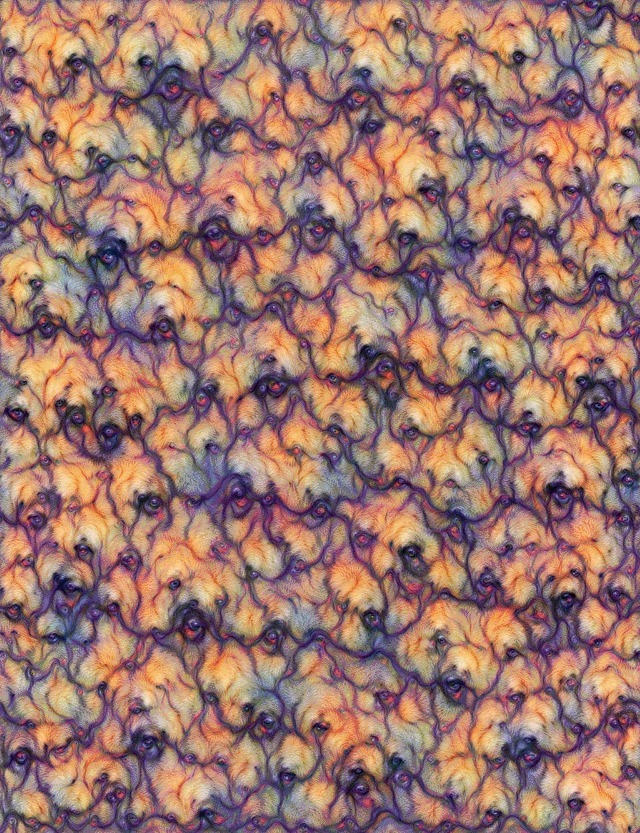

A visual investigation of nightmares in Sesame Street by Alex Bäuerle ( and James Wexler ( of PAIR.īERT, a neural network published by Google in 2018, excels in natural language understanding.
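
To make the Gumbel-Softmax idea more concrete, here is a minimal sketch in plain Python. It is not the code used for this article: the toy vocabulary, the 2-d embedding values, and the helper names `gumbel_softmax` and `soft_embedding` are all assumptions made up for illustration. It only shows how a vector of per-token logits can be turned into a soft, differentiable mixture of token embeddings instead of a single hard token.

```python
import math
import random


def gumbel_softmax(logits, temperature, rng):
    """Sample a relaxed one-hot vector from `logits` (hypothetical helper)."""
    noisy = []
    for logit in logits:
        # Gumbel(0, 1) noise: g = -log(-log(u)) with u ~ Uniform(0, 1).
        u = min(max(rng.random(), 1e-12), 1.0 - 1e-12)  # keep both logs finite
        noisy.append((logit - math.log(-math.log(u))) / temperature)
    # Numerically stable softmax over the perturbed, temperature-scaled logits.
    peak = max(noisy)
    exps = [math.exp(v - peak) for v in noisy]
    total = sum(exps)
    return [e / total for e in exps]


def soft_embedding(weights, embedding_table):
    """Linear combination of token embeddings, weighted by `weights`."""
    dim = len(embedding_table[0])
    return [
        sum(w * row[d] for w, row in zip(weights, embedding_table))
        for d in range(dim)
    ]


# Toy vocabulary and 2-d embeddings (illustrative values, not taken from BERT).
vocab = ["cat", "dog", "car"]
embedding_table = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]

rng = random.Random(0)
logits = [2.0, 0.5, -1.0]  # the quantity a Deep Dream style optimizer would update

weights = gumbel_softmax(logits, temperature=1.0, rng=rng)
model_input = soft_embedding(weights, embedding_table)

# After optimization, the soft input can be mapped back to a real token via argmax.
best_token = vocab[max(range(len(vocab)), key=lambda i: logits[i])]
```

In this sketch, lowering `temperature` pushes `weights` toward a one-hot vector, so the soft input moves closer to the embedding of a single real token. In an actual setup the logits would be updated by gradient ascent on a chosen activation, which this toy example leaves out.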
